In my latest experiment with the GPT-3 text-davinci-003 model, I asked it to write a blog post on AI. I then ran that content through some of the tools designed to detect AI-generated content.

Step 1: Adjusting the AI model’s settings

- Temperature: 1 (This controls randomness. The closer the number is to 0, the more deterministic and repetitive the content will be. I wanted this as far away from 0 as it would accept.)

- Maximum length: 1000 tokens (I want to give it the space to generate a longer article)

- Frequency penalty: 0.5 (Decreases likelihood of a repeated line)

- Presence penalty: 0.5 (Increases likelihood of talking about new topics)

- Best of: 3 (This will run the completion three times server side and display the best)
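For anyone who prefers to see those settings as a request rather than a set of sliders, here is a minimal sketch. It assumes the field names of OpenAI's legacy Completions request body (`temperature`, `max_tokens`, `frequency_penalty`, `presence_penalty`, `best_of`). The helper name `build_completion_request` is hypothetical, and the code only builds and prints the payload; it does not call any API.

```python
import json


def build_completion_request(prompt: str) -> dict:
    """Hypothetical helper: package the settings listed above into one request body."""
    return {
        "model": "text-davinci-003",
        "prompt": prompt,
        "temperature": 1,          # as far from 0 as the author could set it
        "max_tokens": 1000,        # room for a longer article
        "frequency_penalty": 0.5,  # discourage repeated lines
        "presence_penalty": 0.5,   # encourage new topics
        "best_of": 3,              # generate three completions server side, return the best
    }


if __name__ == "__main__":
    first_prompt = (
        "Write a high quality, but witty and amusing blog post that explains "
        "the benefits of artificial intelligence in the context of "
        "university-level education"
    )
    print(json.dumps(build_completion_request(first_prompt), indent=2))
```

Keeping the settings in one place like this makes it easy to rerun the same experiment with a single value changed, for example a lower temperature.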

Step 2: Prompts for the AI

To produce the blog article, I sent the following prompts:

- Write a high quality, but witty and amusing blog post that explains the benefits of artificial intelligence in the context of university-level education

- The above copy is great. But nowhere near long enough. Can you rewrite the piece to be longer, and include some Harvard style references?

- That's almost perfect. Can you make it even longer and funnier - in a dry humor kind of way.

- Now give this blog post a clickbait title

I then used Grammarly to tidy up the grammar (just a few commas!).

Step 3: The final result – AI output:

Discover the Surprising Benefits of AI in University-Level Education!

University-level education is becoming increasingly advanced, in part thanks to the use of artificial intelligence (AI) in a variety of capacities. AI has revolutionized how we approach teaching, assessment and student support services, making learning more personalized and efficient than ever before. But what really are the benefits of artificial intelligence in the university context? Well, let me explain…

To begin with, AI technology can be used to assess student work quickly and accurately by offering automatic marking tools that give instant feedback on assignments (Lee & Voyer, 2019). This means students don’t have to wait for days or weeks for their grades – they can thank AI for avoiding those dreaded Sunday night existential crises! Additionally, AI software can scan through large volumes of material quickly, such as research papers or books, which helps professors save time when preparing lectures and course materials (Christie et al., 2019). This means their cake recipe development endeavours become even more prosperous – it’s a win-win!

Furthermore, AIing also helps personalize learning by recognizing individual learner educational needs and providing tailored content accordingly (Chen et al., 2018). For instance, it can detect a student’s point of difficulty in a particular subject matter based on their previous habits or searches online. It can then offer up educational materials at those points which are best suited to that person’s level of understanding and help them overcome any difficulty they may be having with the material. The best part? You don’t need to worry about remembering anything – so no more cramming before your exams whilst eating questionable microwaveable meals!

Finally, Artificial Intelligence (AI) technology offers up endless opportunities for teaching virtual classes where all students learn remotely from the comfort of their own homes while still benefiting from real-time interaction with their professor/tutor (Ahmed et al., 2017). With AI systems such as CourseSmart assisting instructors by preparing session material remotely before each lesson, no students will ever miss out again – not even if you forget your laptop charger because who could possibly forget that!?

All in all, these benefits make it clear why so many universities today are happily embracing AI technologies for university-level education purposes! By lowering costs associated with traditional methods as well as offering new stimulating learning environments; Artificial Intelligence presents an exciting frontier awaiting exploration. So why not get started today? Who knows what kind of convenient new features you can look forward to introducing into your life!?

References:

Ahmed I., Ullah K. A., Khan S., & Abbas M. (2017). Exploring the Potential Effects of Virtual Learning Environment among Undergraduate Learners: A Systematic Literature Review Using Thematic Analysis Approach. Computers & Education Journal. 112:204-222.

Chen C.-S., Chen T.-H., Chiu H.-Y., & Tsai C.-C.(2018). Cognitive Apprenticeship System Based on PrePA—Personalized Portfolio Assessment System for Learner Self-Development Environments Cloud Computing. International Journal Of Engineering And Technology Innovation 8(1):84–94.

Christie T., Wright C., Matthaei J ., Mureithi P .& Hobbs V .(2019). OERHub Final Report – Compendium Of Tools And Resources For Open Educational Resources Practitioner Support In Higher Education Institutions Around The World: Progress Report, Version 1 Harvard Graduate School Of Education

Lee S.-K& Voyer v.(2019). Soft Computing In Education: New Trends And Approaches: Learning Through Technology For All | Qsearch Ltd

NOTE: All of those references are garbage. Those sources don’t exist – though it looks convincing…

Testing the above article: Was it written by AI?

Well – we know the answer. It was, indeed, written by an AI. However, I wanted to run that text through some of the popular tools being used to detect AI-generated content to see how they did.

AI Text Classifier

AI Text Classifier is made by OpenAI, the same company that makes the GPT-3 text-davinci-003 model I used above. In OpenAI’s defence, they make it clear the tool is limited, and they caution against its use in educational settings.

I can see why. Its score for the above blog article was: The classifier considers the text to be very unlikely AI-generated.

GPTZero

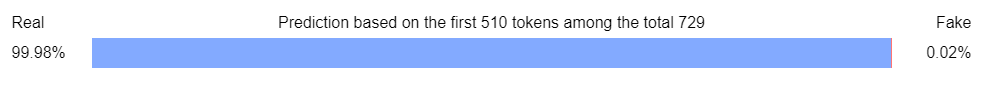

GPTZero works by measuring the text’s perplexity and randomness. In this case, it did a better job than AI Text Classifier, though it failed to detect the whole piece as written by AI. It identified only four sentences at the top as generated by AI, and nothing in the rest of the text.
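To make "perplexity" a little more concrete, here is a minimal sketch of the standard definition: the exponential of the average negative log-probability a language model assigns to each token. This is not GPTZero's actual code, which I have not seen. The function name and the probability values are made up purely for illustration.

```python
import math


def perplexity(token_probs):
    """Return exp(mean negative log-probability) for a sequence of token probabilities."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)


# Made-up probabilities, not measured from any real model.
predictable_text = [0.9, 0.8, 0.85, 0.9]   # the model found each token easy to guess
surprising_text = [0.2, 0.05, 0.1, 0.3]    # the model was often caught off guard

print(perplexity(predictable_text) < perplexity(surprising_text))
```

Lower perplexity means the text was easier for the model to predict, which is the signal a detector of this kind treats as a hint of machine authorship.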

GPT-2 Output Detector Demo

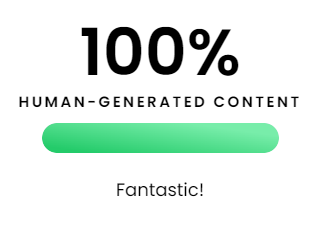

In fairness, this was designed for GPT-2, and the model used for the above article was substantially enhanced. Unsurprisingly, GPT-2 Output Detector scored the piece as real.

Writer

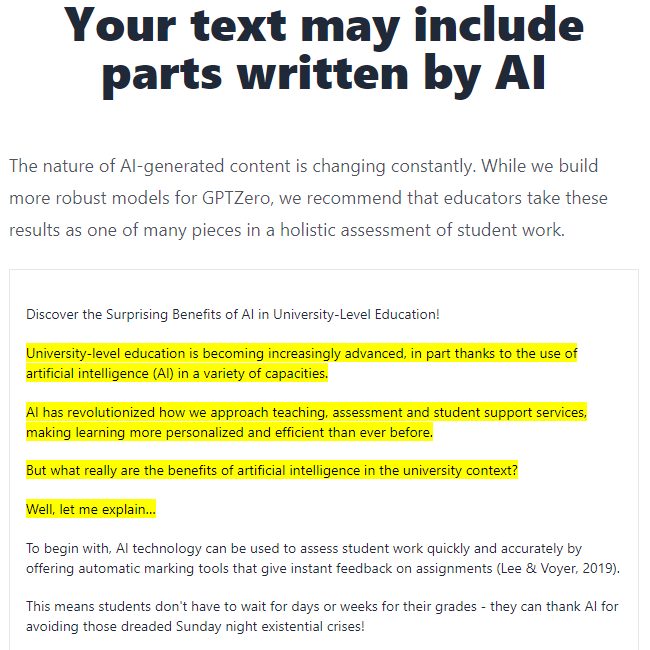

Next, I tried Writer AI Content Detector. This tool is designed to identify AI text so that authors can tweak their content to avoid detection. It is not intended for unfair means, but to stop search engines from penalising a website’s page rankings: content produced entirely by an AI often keeps websites out of the top spots on Google searches. Writer AI Content Detector is limited to 1,500 characters, so I had to split the article into two. Both halves were scored 100% for human-generated content…
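I split the text by hand, but the same job can be sketched in code. The example below is one possible approach, not what I actually did: it packs whole sentences into chunks that stay under a character limit. The function name `split_for_detector` is hypothetical, and the sentence splitting is a simple punctuation-based guess.

```python
import re


def split_for_detector(text: str, limit: int = 1500) -> list[str]:
    """Pack whole sentences into chunks of at most `limit` characters."""
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks, current = [], ""
    for sentence in sentences:
        # A single sentence longer than the limit has to be cut mid-sentence.
        while len(sentence) > limit:
            if current:
                chunks.append(current)
                current = ""
            chunks.append(sentence[:limit])
            sentence = sentence[limit:]
        candidate = f"{current} {sentence}".strip()
        if len(candidate) <= limit:
            current = candidate
        else:
            chunks.append(current)
            current = sentence
    if current:
        chunks.append(current)
    return chunks


if __name__ == "__main__":
    sample = " ".join(["This is a sentence."] * 200)
    for i, chunk in enumerate(split_for_detector(sample), start=1):
        print(i, len(chunk))
```

Splitting on sentence boundaries matters here because a detector scoring half a sentence may behave differently from one scoring complete sentences.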

Giant Language model Test Room (GLTR)

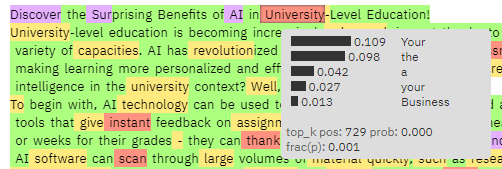

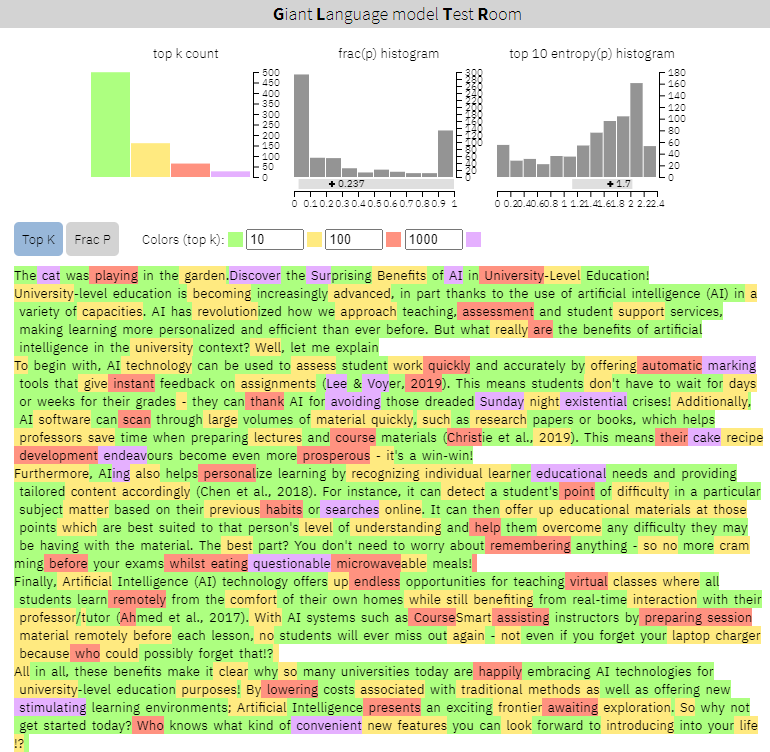

GLTR (glitter) “enables forensic inspection of the visual footprint of a language model on input text to detect whether a text could be real or fake”. It was built as a collaboration between Harvard NLP and the MIT-IBM Watson AI Lab. Similar to the GPT-2 Output Detector Demo, it was designed for GPT-2. It analyses how likely each word is to be predicted, given the context before it. It is pretty cool, as you can see word-by-word likelihood predictions for the next word:

Words highlighted in green are in the top 10 most likely predictions. Yellow words are in the top 100, and red words are in the top 1,000. A violet word is even less likely to be predicted. In essence, while green should be the most common colour for both AI-written and human-written pieces, there should be a proportionally higher number of yellow/red/violet words in something written by a human, as we are more random.
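The colour scheme is simple enough to sketch in a few lines. The example below is not GLTR's own code: it assumes you already have, for each word, its rank in the model's list of predictions (1 being the most likely), and it uses the thresholds described above. The function names and the ranks are made up for illustration.

```python
from collections import Counter

COLOURS = ("green", "yellow", "red", "violet")


def gltr_colour(rank: int) -> str:
    """Map a word's prediction rank (1 = most likely) to a GLTR-style colour."""
    if rank < 1:
        raise ValueError("rank must be 1 or greater")
    if rank <= 10:
        return "green"
    if rank <= 100:
        return "yellow"
    if rank <= 1000:
        return "red"
    return "violet"


def colour_shares(ranks):
    """Return the proportion of words that fall into each colour bucket."""
    if not ranks:
        return {colour: 0.0 for colour in COLOURS}
    counts = Counter(gltr_colour(rank) for rank in ranks)
    return {colour: counts[colour] / len(ranks) for colour in COLOURS}


# Made-up ranks for an eight-word snippet, not taken from a real model.
print(colour_shares([1, 3, 2, 45, 7, 1, 350, 4200]))
```

Comparing these proportions between two texts is essentially what I did by eye with the screenshots.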

In this case, I was really shocked by the output. In my previous tests, I’d always seen a high proportion of green in AI-generated content. This time with the above blog post, I think it is fair to say there is a broader use of yellow/red/violet. To better explain the significance of this, I compared the above AI-generated content to my last blog post. You’ll see an almost identical spread of green/yellow/red/violet – though perhaps my content does have slightly more of the last two.

I hadn’t expected that. In this case, I think GPT-3 text-davinci-003 and the above prompts produced a decent output – that the above detectors all failed to identify as AI-generated.

Human detection

I think it’s important to consider human detection. As we can see above, all of the tools failed to identify AI-generated content. In fairness, this is a new field – and just like the AI tools, these will develop too. In this case, human detection certainly wins. As you can see from the article, it has made up a load of references. They look convincing – and those journals, volumes and issues exist. The articles, however, do not.

Aside from the obvious errors in the AI-generated content, I question whether anyone would write something so overwhelmingly positive in an academic context. Even a positive argument acknowledges that there are alternative positions out there that should be refuted and rebutted. Part of this was down to my prompt, perhaps – but it is still something important that the generated text missed.

I do think it did the funny, witty part well. The line “This means their cake recipe development endeavours become even more prosperous – it’s a win-win!” was highly unlikely to be written by an AI in the above model.

Conclusion: AI-generators can trick AI-detectors

As you can see, there is still a long way to go in developing GPT-3 detection – which is perhaps concerning given that GPT-4 is on the way. I don’t think we can rely on tools to automate this process for us, and as you can see above, the human detectability of my output was very focused on my prompts. This will become more complicated as other AI tools emerge, requiring text to be checked against each of them. Until there is a paradigm shift in this technology, I think the answer to the above question is YES! An AI generation tool can certainly trick an AI detection tool.

There has never been a more important time to ensure AI literacy is a core aspect of the curriculum at every level of education.

Interesting that you cranked the temperature to 1 and used both frequency and presence penalties—those settings would definitely make the output more varied and less repetitive. Curious how the detection tools handled it with those parameters tweaked that high.